mirror of

https://github.com/actions/toolkit

synced 2025-05-09 00:22:56 +00:00

[Artifacts] Prepare for v2.0.0 of @actions/artifact (#1479)

* Prepare for v2.0.0 of @actions/artifact * Run prettier * temporary disable unused vars

This commit is contained in:

parent

91d3933eb5

commit

c4f5ce2665

44 changed files with 132 additions and 5896 deletions

|

|

@ -1,53 +0,0 @@

|

|||

# Additional Information

|

||||

|

||||

Extra information

|

||||

- [Non-Supported Characters](#Non-Supported-Characters)

|

||||

- [Permission loss](#Permission-Loss)

|

||||

- [Considerations](#Considerations)

|

||||

- [Compression](#Is-my-artifact-compressed)

|

||||

|

||||

## Non-Supported Characters

|

||||

|

||||

When uploading an artifact, the inputted `name` parameter along with the files specified in `files` cannot contain any of the following characters. They will be rejected by the server if attempted to be sent over and the upload will fail. These characters are not allowed due to limitations and restrictions with certain file systems such as NTFS. To maintain platform-agnostic behavior, all characters that are not supported by an individual filesystem/platform will not be supported on all filesystems/platforms.

|

||||

|

||||

- "

|

||||

- :

|

||||

- <

|

||||

- \>

|

||||

- |

|

||||

- \*

|

||||

- ?

|

||||

|

||||

In addition to the aforementioned characters, the inputted `name` also cannot include the following

|

||||

- \

|

||||

- /

|

||||

|

||||

|

||||

## Permission Loss

|

||||

|

||||

File permissions are not maintained between uploaded and downloaded artifacts. If file permissions are something that need to be maintained (such as an executable), consider archiving all of the files using something like `tar` and then uploading the single archive. After downloading the artifact, you can `un-tar` the individual file and permissions will be preserved.

|

||||

|

||||

```js

|

||||

const artifact = require('@actions/artifact');

|

||||

const artifactClient = artifact.create()

|

||||

const artifactName = 'my-artifact';

|

||||

const files = [

|

||||

'/home/user/files/plz-upload/my-archive.tgz',

|

||||

]

|

||||

const rootDirectory = '/home/user/files/plz-upload'

|

||||

const uploadResult = await artifactClient.uploadArtifact(artifactName, files, rootDirectory)

|

||||

```

|

||||

|

||||

## Considerations

|

||||

|

||||

During upload, each file is uploaded concurrently in 4MB chunks using a separate HTTPS connection per file. Chunked uploads are used so that in the event of a failure (which is entirely possible because the internet is not perfect), the upload can be retried. If there is an error, a retry will be attempted after a certain period of time.

|

||||

|

||||

Uploading will be generally be faster if there are fewer files that are larger in size vs if there are lots of smaller files. Depending on the types and quantities of files being uploaded, it might be beneficial to separately compress and archive everything into a single archive (using something like `tar` or `zip`) before starting and artifact upload to speed things up.

|

||||

|

||||

## Is my artifact compressed?

|

||||

|

||||

GZip is used internally to compress individual files before starting an upload. Compression helps reduce the total amount of data that must be uploaded and stored while helping to speed up uploads (this performance benefit is significant especially on self hosted runners). If GZip does not reduce the size of the file that is being uploaded, the original file is uploaded as-is.

|

||||

|

||||

Compression using GZip also helps speed up artifact download as part of a workflow. Header information is used to determine if an individual file was uploaded using GZip and if necessary, decompression is used.

|

||||

|

||||

When downloading an artifact from the GitHub UI (this differs from downloading an artifact during a workflow), a single Zip file is dynamically created that contains all of the files uploaded as part of an artifact. Any files that were uploaded using GZip will be decompressed on the server before being added to the Zip file with the remaining files.

|

||||

1

packages/artifact/docs/docs.md

Normal file

1

packages/artifact/docs/docs.md

Normal file

|

|

@ -0,0 +1 @@

|

|||

Docs will be added here once development of version `2.0.0` has finished

|

||||

|

|

@ -1,57 +0,0 @@

|

|||

# Implementation Details

|

||||

|

||||

Warning: Implementation details may change at any time without notice. This is meant to serve as a reference to help users understand the package.

|

||||

|

||||

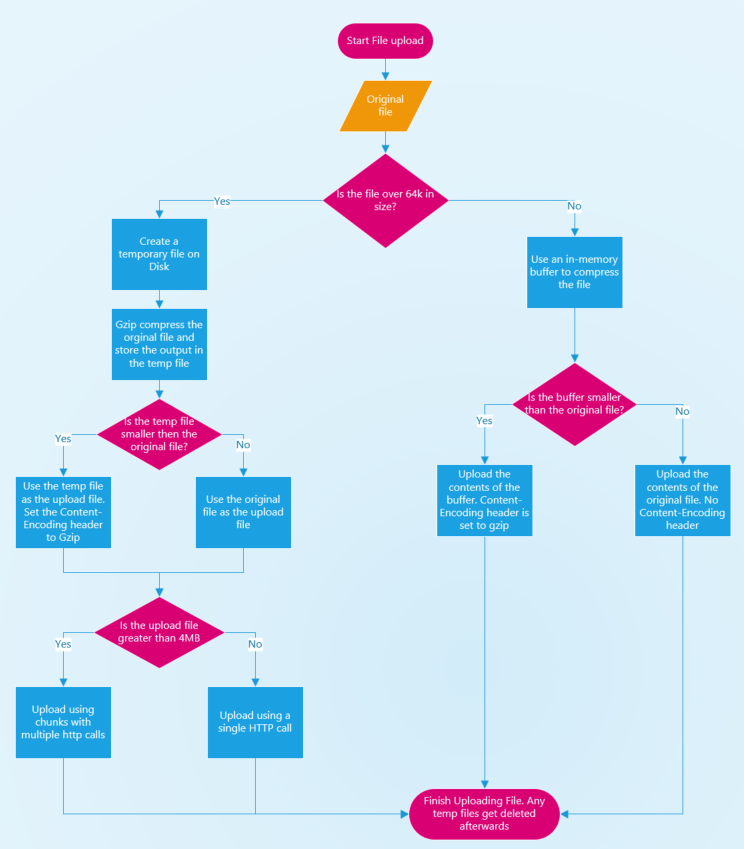

## Upload/Compression flow

|

||||

|

||||

|

||||

|

||||

During artifact upload, gzip is used to compress individual files that then get uploaded. This is used to minimize the amount of data that gets uploaded which reduces the total amount of HTTP calls (upload happens in 4MB chunks). This results in considerably faster uploads with huge performance implications especially on self-hosted runners.

|

||||

|

||||

If a file is less than 64KB in size, a passthrough stream (readable and writable) is used to convert an in-memory buffer into a readable stream without any extra streams or pipping.

|

||||

|

||||

## Retry Logic when downloading an individual file

|

||||

|

||||

|

||||

|

||||

## Proxy support

|

||||

|

||||

This package uses the `@actions/http-client` NPM package internally which supports proxied requests out of the box.

|

||||

|

||||

## HttpManager

|

||||

|

||||

### `keep-alive` header

|

||||

|

||||

When an HTTP call is made to upload or download an individual file, the server will close the HTTP connection after the upload/download is complete and respond with a header indicating `Connection: close`.

|

||||

|

||||

[HTTP closed connection header information](https://tools.ietf.org/html/rfc2616#section-14.10)

|

||||

|

||||

TCP connections are sometimes not immediately closed by the node client (Windows might hold on to the port for an extra period of time before actually releasing it for example) and a large amount of closed connections can cause port exhaustion before ports get released and are available again.

|

||||

|

||||

VMs hosted by GitHub Actions have 1024 available ports so uploading 1000+ files very quickly can cause port exhaustion if connections get closed immediately. This can start to cause strange undefined behavior and timeouts.

|

||||

|

||||

In order for connections to not close immediately, the `keep-alive` header is used to indicate to the server that the connection should stay open. If a `keep-alive` header is used, the connection needs to be disposed of by calling `dispose()` in the `HttpClient`.

|

||||

|

||||

[`keep-alive` header information](https://developer.mozilla.org/en-US/docs/Web/HTTP/Headers/Keep-Alive)

|

||||

[@actions/http-client client disposal](https://github.com/actions/http-client/blob/04e5ad73cd3fd1f5610a32116b0759eddf6570d2/index.ts#L292)

|

||||

|

||||

|

||||

### Multiple HTTP clients

|

||||

|

||||

During an artifact upload or download, files are concurrently uploaded or downloaded using `async/await`. When an error or retry is encountered, the `HttpClient` that made a call is disposed of and a new one is created. If a single `HttpClient` was used for all HTTP calls and it had to be disposed, it could inadvertently effect any other calls that could be concurrently happening.

|

||||

|

||||

Any other concurrent uploads or downloads should be left untouched. Because of this, each concurrent upload or download gets its own `HttpClient`. The `http-manager` is used to manage all available clients and each concurrent upload or download maintains a `httpClientIndex` that keep track of which client should be used (and potentially disposed and recycled if necessary)

|

||||

|

||||

### Potential resource leaks

|

||||

|

||||

When an HTTP response is received, it consists of two parts

|

||||

- `message`

|

||||

- `body`

|

||||

|

||||

The `message` contains information such as the response code and header information and it is available immediately. The body however is not available immediately and it can be read by calling `await response.readBody()`.

|

||||

|

||||

TCP connections consist of an input and output buffer to manage what is sent and received across a connection. If the body is not read (even if its contents are not needed) the buffers can stay in use even after `dispose()` gets called on the `HttpClient`. The buffers get released automatically after a certain period of time, but in order for them to be explicitly cleared, `readBody()` is always called.

|

||||

|

||||

### Non Concurrent calls

|

||||

|

||||

Both `upload-http-client` and `download-http-client` do not instantiate or create any HTTP clients (the `HttpManager` has that responsibility). If an HTTP call has to be made that does not require the `keep-alive` header (such as when calling `listArtifacts` or `patchArtifactSize`), the first `HttpClient` in the `HttpManager` is used. The number of available clients is equal to the upload or download concurrency and there will always be at least one available.

|

||||

Loading…

Add table

Add a link

Reference in a new issue